Welcome to Lab Notes, a new creative technology newsletter built for the Brandcenter community.

Creative tech is moving fast, and “try everything” is not a strategy. The goal here is simple: save you time, sharpen your taste, and give you practical moves you can apply immediately.

Each issue will bring you:

- A short POV on what matters right now (and what doesn’t)

- A few curated links of work/tools/whatever’s popping

- A new experiment: one tool or workflow to try

Less hype. More reps. Let’s make some stuff.

– Micah Berry, Brandcenter Associate Director of Technical Training

Consumer Electronics Show: Micah’s POV

CES (the Consumer Electronics Show) is the tech world’s annual “here’s what’s coming” reveal in Las Vegas. It’s not just gadget folks—agencies, platforms, and brand teams go because CES is where companies quietly show their next chapter. I’ve met some of the most interesting people (and weirdly good ideas) there over the years; it’s a full-sensory whirlwind. This year: 148,000+ attendees, 4,100+ exhibitors, and ~1,200 startups.

The loudest theme for 2026 was pretty simple: AI is leaving the demo stage and showing up as real product. Less “look what it can do,” more “this is now built into the tools you’ll actually touch.”

1. Agencies are trying to become the operating layer: One of the most ad-relevant signals at CES was holdcos positioning themselves as platforms. WPP launched an Agent Hub inside WPP Open and described it as an internal “app store” of agents, including more advanced “Super Agents.” Omnicom unveiled the new Omni as an AI-driven marketing intelligence platform. Havas announced AVA, a secure portal meant to centralize access to leading models. Stagwell introduced The Machine, framed as an “agentic operating system” meant to unify workflows across the stack teams already use.

- Student Translation: Expect more briefs, planning, and even early creative development to route through these emerging agent hubs. That means more expectations around governance, provenance, and pricing. The job isn’t just “make something cool” anymore. It’s “make something cool that survives a workflow, a legal review, and 12 versions.”

2. Disney is going vertical, and “premium” is becoming more modular: Disney signaled where premium streaming is heading by announcing a vertical video feed coming to Disney+ later in 2026. And it wasn’t just “here’s a new feed.” Disney also described a video generation tool designed for advertising that versions creative using existing brand assets and guidelines, with automatic adjustments informed by audience engagement.

- Student Translation: “One perfect hero film” is not the only output. The brief is trending toward systems: modular design and copy components built to recombine safely across placements, sizes, and audiences.

3. Performance buying is getting more automated (and your leverage becomes inputs + guardrails): Reddit unveiled Max Campaigns, an AI-powered campaign engine meant to automate parts of targeting, bidding, and optimization inside Reddit Ads Manager. The pitch is basically: “set the goal, feed it good assets, and let the system do more of the machine work.” This signal feels a lot like Google Pomelli, Higgsfield Click-To-Ad, and similar experiments we have been talking about in recent classes. For us, the power in these tools right now is in the implications… not the outputs.

- Student Translation (especially for Strats + CBMs): More spend will shift toward automated outcome engines. Your leverage becomes:

  - Clearer hypotheses (what are we testing?)

  - Better creative variety (give the machine options that still feel on-brand)

  - Clean measurement and boundaries (what it is allowed to optimize for, and what it is not)

4. Physical AI showed up everywhere, and robots are becoming a new interface: CES wasn’t “AI in a box” this year. It was physical AI: intelligence leaving the screen and getting a body. CES programming even used the term outright, and robotics was everywhere on the floor.

- Hyundai/Boston Dynamics rolled out the new humanoid Atlas in a very public way, though reporters noted the stage demo was remote-controlled, with autonomy still the next milestone.

- At the same time, Google DeepMind and Boston Dynamics announced a partnership to bring Gemini-based “foundational intelligence” to robots, aimed at making them context-aware and useful in real factory environments first.

- And NVIDIA basically said: “Cool, here’s the operating system.” They pushed a full stack (models, simulation, tooling) to accelerate robot training and deployment.

- Student Translation: Robots are not just a tech demo anymore. They’re becoming a new kind of screen. That’s right… let it sink in. If a robot is the “face” of a service, then voice, tone, personality, trust cues, and escalation language will become design problems, not engineering trivia.

Three CES takeaways that should stick:

1. Idea protection: When everything gets easier to generate and easier to version, the scarce skill is keeping the concept intact through the escalating rounds of change. Taste. Craft. Storytelling.

2. Modular beats monolithic: Creative will start to look even more like LEGO than it already does, requiring components that remix cleanly across formats (vertical, OOH, social, CTV, robots?!) without breaking the brand.

3. Guardrails are creative: “Cute” AI companions, automated buying, robot interfaces, all of it makes trust and boundaries part of the craft. If you can’t explain what the system is optimizing for (and what it should never optimize for), you’re not directing. You’re hoping.

Signals in the Noise

Adobe’s post-production suite gets a meaningful speed boost: Adobe rolled out major updates across Premiere and After Effects, highlighted by faster, easier masking (Object Mask + improved shape masks), plus workflow glue like the Firefly Boards → Premiere handoff and the newer in-app Frame.io panel. That’s a clean “craft still matters, but the knobs are moving” signal to editors and animators.

Midjourney releases a new model: Niji 7, launched January 9, 2026, significantly improves coherence, making fine details like eyes, reflections, and background elements clearer while following prompts more closely for specific designs and repeatable characters. It’s more literal (so broad “vibey” prompts may behave differently) and introduces a cleaner, flatter look that emphasizes improved line work; see the Niji blog for details.

Apple Siri gets a Google Gemini brain: Apple is reportedly leaning on Google’s Gemini to power a major Siri and Apple Intelligence overhaul, making it more conversational, more contextual, and better at taking actions across apps. The models would run through Apple’s privacy-focused infrastructure, with Apple owning the product layer while Google supplies a lot of the “brain.” Brandcenter takeaway: even Apple is choosing best-available model + tight experience control… a preview of how many brands will build (smart core, differentiated by taste, constraints, and UX).

Universal Music Group (UMG) keeps investing in music AI: UMG is steering AI music toward licensed, artist-approved ecosystems. Recent examples include the Udio legal settlement (Oct 2025), a Splice partnership (Dec 2025), and a fully licensable AI music generation platform planned for 2026, all pointing toward tools built on artists’ own sounds. Expect more label-backed “commercially safe” creation suites and clearer boundaries as this approach spreads.

Distribution shifts + GEO: YouTube is becoming harder to treat as “optional” as AI answer engines increasingly use video as source material, raising the stakes for creators who rely on discovery. Practically, that means chapters, accurate transcripts, and metadata start acting like “GEO” (generative engine optimization) inputs. This makes it easier for both humans and machines to quote, reference, and route viewers to your work. And probably easier to misinform as well.

Google quietly turned Chrome into a creative workspace: A new Gemini side panel, Auto Browse for multi-step web tasks, and “Nano Banana” image editing right inside the browser. The big deal isn’t novelty but friction reduction: research → draft → iterate can now happen in the same window you already live in all day. For students, this is the clearest signal yet that the “creative OS” is shifting from single apps to agentic workflows in the browser.

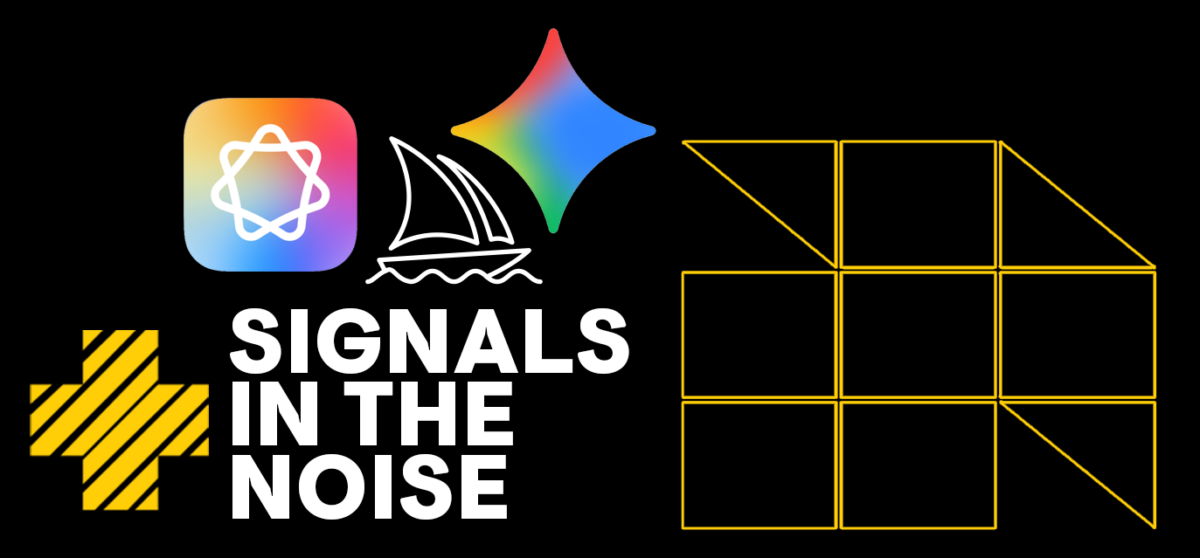

The Toolbench

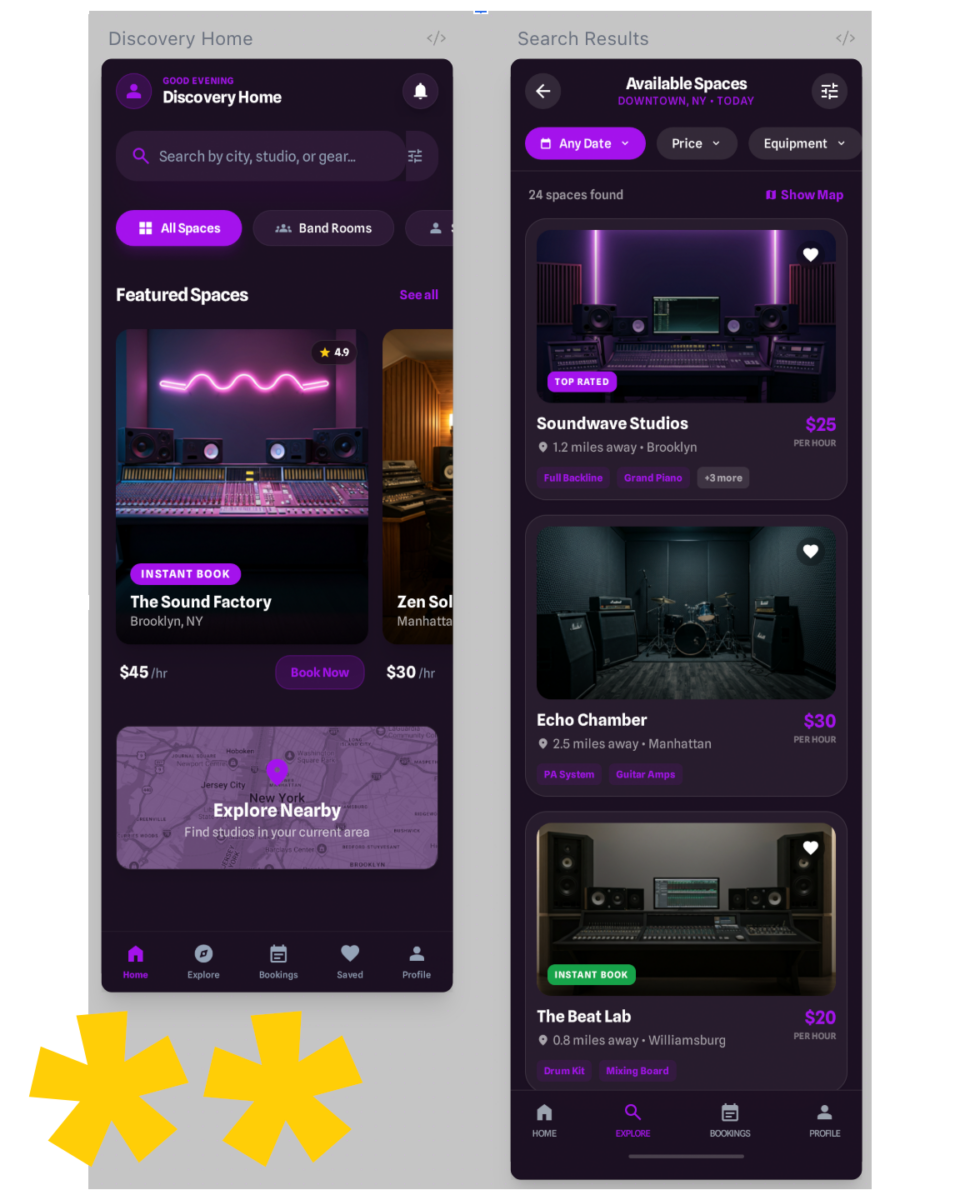

Google Creative Labs: Stitch

Stitch is the most literal version of “don’t start from a blank frame” I’ve seen in UI land (in the same vein as Figma Make, but more accessible). You give it a prompt like “mobile app for booking rehearsal spaces” (screenshot below), optionally drop in a sketch or reference screen, and it generates a somewhat polished UI plus corresponding front-end code. From there, you iterate conversationally: “make it more minimal,” “increase hierarchy,” “add a pricing screen.” You can even combine multiple UI screens into a clickable prototype.

Google Creative Labs positions Stitch as a bridge between design and development: faster ideation for designers, faster starting code for developers, fewer handoff hiccups for everyone caught in the middle. Stitch is powered by Gemini, so as Google updates the underlying model (including the Gemini 3 upgrade announced in late 2025), Stitch keeps getting more powerful.

Speed-to-variant is a real win here. You can explore multiple layout and style directions quickly (great for concepting and critique). An emerging luxury.

Strong outputs that hold up as prototypes are another clear win. You don’t just get vibes; you get screens and code you can react to and build on.

Cross-discipline translation rounds it out: strategists can describe intent, creatives can refine hierarchy, and together they land on something that lets devs start from scaffolding, not from scratch. I can see the producers smiling from here.

The Creative Labs team works fast (Labs gonna be Labs), so treat your first run as a quick calibration pass and expect the ground to shift beneath you. To excel in a world where the floor moves, we’ve got to get more nimble. So: exercise.

A PRACTICAL “STITCH PROMPT” EXERCISE: Copy/paste and swap the brackets…

- Prompt: “Design a [mobile/web] UI for [who] to [do what]”

- Brand attributes: [3–5 adjectives]

- Visual direction: [type style + references: ‘editorial’, ‘brutalist’, ‘Apple-like’, etc.]

- Primary flow: [screen 1 → screen 2 → screen 3]

- Key components: [search, filters, cards, checkout, calendar, etc.]

- Accessibility: [high contrast / large type / clear tap targets]

Why this works: it forces intent, hierarchy, and flow. You bring the product strategy and the basic design idea; Stitch helps turn them into a UI prototype quickly.

Play around. Make something. Share it with some people. Try again.

Want to Keep Reading?

Creative AI Is Becoming a Stack. Photoshop Just Confirmed It. Adobe didn’t just add options. It signaled a new operating system for creative production.

The Creative Industry Isn’t Dying. It’s Updating Its OS. When execution gets cheaper, judgment gets more valuable.

Questions?

Reach out to us at [email protected]